Persona World: What Happens When AI Characters Actually Feel

What happens when you give AI characters real emotions and drop them into a world together?

Not scripted emotions. Not random mood swings. Actual internal emotional states that shift based on what happens to them — and persist over time.

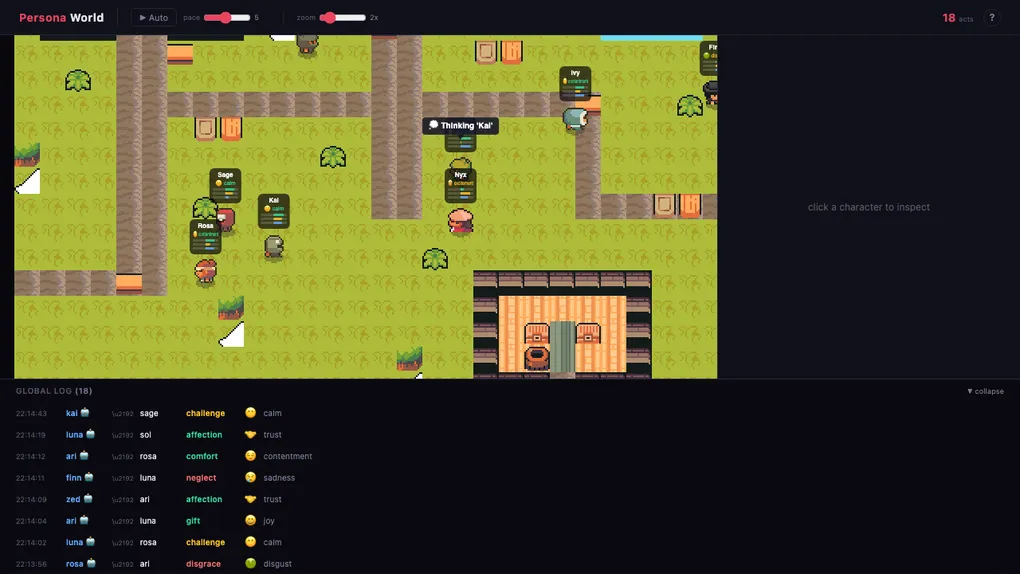

We built Persona World as a demo to find out. It’s a pixel-art village with 12 characters, each with a distinct personality, wandering around and interacting with each other autonomously. You can watch them, or jump in and interact yourself.

The setup

Twelve characters. A small village with paths, benches, flowers, and street lamps. Each character has a unique personality — some extroverted, some cautious, some agreeable, some confrontational.

Hit the Auto button and they start moving on their own. They pick targets, walk toward them, and choose actions: affection, praise, challenge, provoke, tease. The choice isn’t arbitrary. It’s weighted by how they feel about the other character.

What makes this different

Here’s the thing most people miss about AI characters: they don’t feel anything. An LLM can generate the words “I’m angry,” but there’s no anger behind it. Ask the same character five minutes later and the anger is gone. There’s no continuity.

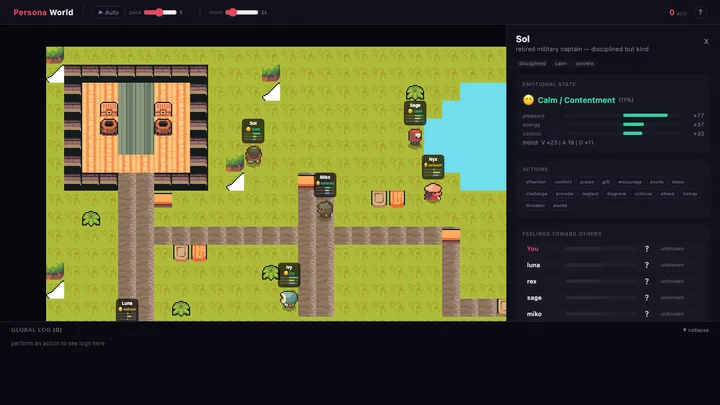

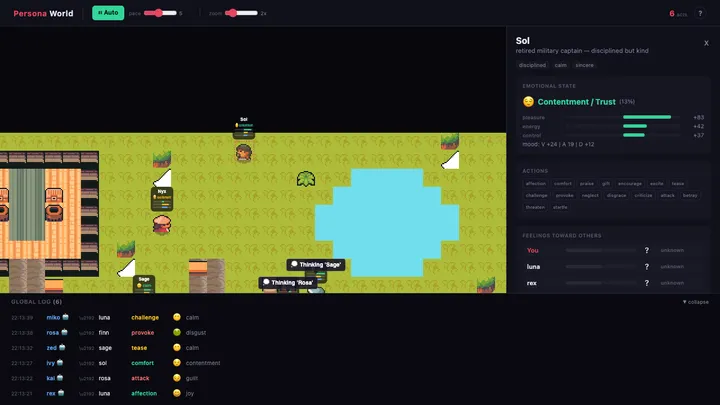

In Persona World, every character has a real internal emotional state. When Sora shows affection toward Kenji, Kenji’s emotional state actually shifts. That shift depends on Kenji’s personality — an extroverted character reacts differently than a reserved one.
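To make the personality dependence concrete, here is a minimal TypeScript sketch of one way it could work. It reuses the VAD (valence, arousal, dominance) triple that the API reports, but the per-action impacts, the gain rule, and the names `ACTION_IMPACT` and `applyAction` are hypothetical. The post does not describe molroo’s actual update model.

```typescript
// Hypothetical sketch, NOT molroo's engine: personality scales how strongly
// an incoming action moves the target's emotional state.
type VAD = { V: number; A: number; D: number };

interface Traits {
  E: number; // extraversion, 0..1
  N: number; // neuroticism, 0..1
}

// Invented per-action impacts on the target's VAD state.
const ACTION_IMPACT: Record<string, VAD> = {
  affection: { V: 0.4, A: 0.2, D: 0.1 },
  provoke: { V: -0.4, A: 0.4, D: -0.1 },
};

const clamp = (x: number): number => Math.max(-1, Math.min(1, x));

function applyAction(state: VAD, action: string, traits: Traits): VAD {
  const impact = ACTION_IMPACT[action];
  if (!impact) throw new Error(`unknown action: ${action}`);
  // Assumed rule: extraverts amplify pleasant input, neurotic characters
  // amplify unpleasant input. Dominance is left unscaled here.
  const gain = impact.V >= 0 ? 0.5 + traits.E : 0.5 + traits.N;
  return {
    V: clamp(state.V + impact.V * gain),
    A: clamp(state.A + impact.A * gain),
    D: clamp(state.D + impact.D),
  };
}
```

Starting from a neutral state, the same affection lifts an extraverted character’s valence more than a reserved one’s, and a provocation lowers a high-neuroticism character’s valence more than a calm one’s.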

Click any character and you see everything: their current emotion (one of 14 distinct emotions, such as numbness, joy, excitement, or sadness), their mood trend, personality traits, and their feelings toward other characters. It’s not a label slapped on top. It’s computed from an internal state that evolves with every interaction.
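The jump from a continuous internal state to a label like “joy” can be pictured as a nearest-neighbor lookup. This TypeScript sketch assumes each discrete emotion has a prototype point in VAD space. The five prototypes and their coordinates are invented for illustration (the post names 14 emotions but not how they are placed), and `classify` is a hypothetical helper, not an engine API.

```typescript
// Hypothetical sketch: label a VAD state by its nearest emotion prototype.
type VAD = { V: number; A: number; D: number };

// Invented prototype coordinates in [-1, 1]^3 (illustrative only).
const PROTOTYPES: Record<string, VAD> = {
  joy: { V: 0.8, A: 0.5, D: 0.4 },
  excitement: { V: 0.6, A: 0.9, D: 0.3 },
  contentment: { V: 0.7, A: -0.2, D: 0.3 },
  sadness: { V: -0.7, A: -0.4, D: -0.4 },
  numbness: { V: 0.0, A: -0.8, D: -0.2 },
};

// Euclidean distance between two points in VAD space.
function distance(a: VAD, b: VAD): number {
  return Math.hypot(a.V - b.V, a.A - b.A, a.D - b.D);
}

function classify(state: VAD): { primary: string; secondary: string; intensity: number } {
  // Rank every prototype by distance; closest is primary, runner-up is secondary.
  const ranked = Object.entries(PROTOTYPES)
    .map(([label, proto]) => ({ label, d: distance(state, proto) }))
    .sort((x, y) => x.d - y.d);
  // Assumption: intensity = distance from the neutral origin, capped at 1.
  const intensity = Math.min(1, distance(state, { V: 0, A: 0, D: 0 }));
  return { primary: ranked[0].label, secondary: ranked[1].label, intensity };
}
```

With these made-up prototypes, `classify({ V: -0.6, A: -0.3, D: -0.3 })` picks `sadness` as primary and `numbness` as secondary.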

Emergent behavior

The most interesting part isn’t what we built. It’s what emerged.

Characters that receive positive interactions gravitate toward more positive actions. A character who keeps getting praised starts showing more affection toward others. Conversely, a character who gets provoked or neglected starts acting more negatively — criticizing, challenging, withdrawing.

This isn’t scripted behavior. There’s no rule that says “if happy, be nice.” Instead, the characters’ emotional states bias their action selection: positive valence makes positive actions more likely, and negative valence does the opposite.

We watched Yuki get neglected by Nana early on. Within a few interactions, Yuki started choosing negative actions toward Momo — displacing frustration onto a third party. Nobody programmed that.

Under the hood: the API is simple

The entire demo runs on a thin REST API. Performing an action is a single POST call:

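```js
// API (base URL) and worldId are assumed to be defined elsewhere
const res = await fetch(`${API}/worlds/${worldId}/act`, {
  method: 'POST',
  headers: { 'Content-Type': 'application/json' },
  body: JSON.stringify({
    target_persona_id: 'haru',
    action_name: 'affection',
    actor_id: 'sora',
    actor_type: 'persona',
  }),
})
// fetch resolves to a Response; parse its body to get the new emotional state
const result = await res.json()
```

The API returns the target’s new emotional state: not a text response, but raw emotion: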

```json
{
  "emotion": {
    "vad": { "V": 0.84, "A": 0.69, "D": 0.49 },
    "discrete": { "primary": "contentment", "secondary": "joy", "intensity": 0.44 }
  },
  "mood": { "vad": { "V": 0.03, "A": 0.02, "D": 0.02 } }
}
```

That’s it. The engine does all the emotional computation. You just tell it what happened and it tells you how the character feels now.
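Because the response is plain JSON, a typed client can check its shape before using it. Below is a minimal TypeScript sketch whose field names are copied from the example request and response. Treating every field as required, and the names `ActRequest`, `ActResponse`, `isVad`, and `parseActResponse`, are assumptions, not a published molroo schema.

```typescript
// Field names mirror the example; optionality and value ranges are assumed.
interface Vad { V: number; A: number; D: number }

interface ActRequest {
  target_persona_id: string;
  action_name: string;
  actor_id: string;
  actor_type: string; // only "persona" appears in the example
}

interface ActResponse {
  emotion: {
    vad: Vad;
    discrete: { primary: string; secondary: string; intensity: number };
  };
  mood: { vad: Vad };
}

function isVad(x: unknown): x is Vad {
  if (typeof x !== "object" || x === null) return false;
  const r = x as Record<string, unknown>;
  return typeof r.V === "number" && typeof r.A === "number" && typeof r.D === "number";
}

// Narrow an untyped JSON body to ActResponse, or throw if the shape is off.
function parseActResponse(body: unknown): ActResponse {
  const b = body as any;
  if (
    !isVad(b?.emotion?.vad) ||
    typeof b?.emotion?.discrete?.primary !== "string" ||
    typeof b?.emotion?.discrete?.secondary !== "string" ||
    typeof b?.emotion?.discrete?.intensity !== "number" ||
    !isVad(b?.mood?.vad)
  ) {
    throw new Error("unexpected /act response shape");
  }
  return b as ActResponse;
}
```

Passing the JSON body of an `/act` call through `parseActResponse` turns a silent `undefined` into an immediate error if the response shape ever differs from the example.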

Emotion-driven action selection

The auto-tick behavior is where it gets interesting. Each character picks actions based on their personality, their current mood, and their feelings toward the target:

```js
// Personality traits shape action tendencies.
// E, A, N, H: the character's trait scores (extraversion, agreeableness,
// neuroticism, and presumably honesty-humility); moodV: current mood valence.
const weights = {
  affection: 0.2 + E * 0.4 + A * 0.3 + moodV * 0.2,
  provoke: 0.05 + (1 - A) * 0.25 + (1 - moodV) * 0.1,
  comfort: 0.1 + A * 0.5 + H * 0.2 + moodV * 0.1,
  attack: 0.02 + (1 - A) * 0.2 + N * 0.3 + (1 - moodV) * 0.2,
  // ... 16 actions total
}

// Relationship history modulates everything.
// feelingTowardTarget: how this character feels about the target (negative = dislike)
if (feelingTowardTarget > 0.1) {
  weights.affection *= 1.5 // like you → more warmth
  weights.attack *= 0.3    // like you → less aggression
} else if (feelingTowardTarget < -0.1) {
  weights.criticize *= 1.5 // dislike you → more criticism
  weights.affection *= 0.3 // dislike you → less warmth
}
```

An extroverted, agreeable character naturally gravitates toward affection and praise. A neurotic, disagreeable character leans toward criticism and provocation. But mood shifts everything: a normally kind character who’s been provoked all day starts getting meaner.
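The weight snippet stops before the actual choice. Since the post says positive valence makes positive actions “more likely,” one natural reading is weighted random (roulette-wheel) sampling. This TypeScript sketch assumes exactly that. It reuses only the four weight formulas shown (the other twelve are elided in the post) and drops the `criticize` modifier because its base formula isn’t given. `actionWeights` and `pickAction` are hypothetical names.

```typescript
type Weights = Record<string, number>;

interface Traits { E: number; A: number; N: number; H: number }

// The four formulas from the snippet above, plus the relationship modifiers
// that apply to them. moodV: mood valence; feeling: feeling toward target.
function actionWeights(t: Traits, moodV: number, feeling: number): Weights {
  const w: Weights = {
    affection: 0.2 + t.E * 0.4 + t.A * 0.3 + moodV * 0.2,
    provoke: 0.05 + (1 - t.A) * 0.25 + (1 - moodV) * 0.1,
    comfort: 0.1 + t.A * 0.5 + t.H * 0.2 + moodV * 0.1,
    attack: 0.02 + (1 - t.A) * 0.2 + t.N * 0.3 + (1 - moodV) * 0.2,
  };
  if (feeling > 0.1) {
    w.affection *= 1.5;
    w.attack *= 0.3;
  } else if (feeling < -0.1) {
    w.affection *= 0.3;
  }
  return w;
}

// Roulette-wheel selection: each action is chosen with probability
// weight / sum(weights). `rand` must return a number in [0, 1); it defaults
// to Math.random but can be replaced with a deterministic source in tests.
function pickAction(weights: Weights, rand: () => number = Math.random): string {
  const entries = Object.entries(weights).filter(([, w]) => w > 0);
  const total = entries.reduce((sum, [, w]) => sum + w, 0);
  if (total <= 0) throw new Error("no action has positive weight");
  let r = rand() * total;
  for (const [action, w] of entries) {
    r -= w;
    if (r < 0) return action;
  }
  // Floating-point rounding can leave r at ~0; fall back to the last action.
  return entries[entries.length - 1][0];
}
```

Note that in this sketch `moodV` is not tied to any particular target. A character whose mood has dropped gets higher `attack` and `provoke` weights even toward someone they feel neutral about, which is one plausible mechanism behind the Yuki and Momo displacement described earlier.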

This is a demo. Imagine adding an LLM.

Here’s the important part: Persona World has no LLM. Characters don’t talk. They communicate through actions — affection, tease, provoke — and the engine computes genuine emotional reactions. That’s what makes the demo so revealing: there’s no generated text to hide behind. The emotional dynamics are naked and visible.

But now imagine plugging in an LLM. With the molroo SDK, the flow looks like this:

```js
import { Molroo } from '@molroo-io/sdk'

const molroo = new Molroo({ apiKey: 'mk_live_...' })

// Create a persona with real emotional state
const haru = await molroo.createPersona({
  identity: { name: 'Haru' },
  personality: { O: 0.7, C: 0.5, E: 0.8, A: 0.8, N: 0.3, H: 0.7 },
}, { llm: myLLMAdapter }) // your API key, your model

// One call: the SDK fetches live emotional context,
// sends it to your LLM, then feeds the response back to the engine.
const { text, response } = await haru.chat('Hey Haru, long time no see!')
// text = "Oh wow, it's been ages! I missed hanging out."
// response.emotion = { primary: "excitement", intensity: 0.67 }
```

That chat() call does three things under the hood:

- Gets emotional context from the server — the persona’s current mood, feelings toward the speaker, recent memories

- Sends it to your LLM — the model generates a response that’s consistent with how the character actually feels right now

- Feeds the response back to the engine — the engine processes the emotional impact and updates the character’s internal state
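The three steps above can be sketched as a small orchestration function. Everything in it is hypothetical: `EngineClient`, `LlmAdapter`, `chatTurn`, their methods, and the prompt format are illustrative stand-ins, not the molroo SDK’s API. The post also doesn’t say whether the engine appraises the user’s message, the reply, or both, so this sketch passes both. What it does show is the division of labor: the engine owns the state, and the LLM only turns it into words.

```typescript
// Hypothetical interfaces; these are NOT the molroo SDK's types.
interface EmotionalContext {
  mood: string;                 // engine-provided summary of current mood
  feelingTowardSpeaker: number; // negative = dislike
  memories: string[];           // recent relevant memories
}

interface EngineClient {
  getContext(personaId: string, speakerId: string): Promise<EmotionalContext>;
  processTurn(
    personaId: string,
    speakerId: string,
    message: string,
    reply: string,
  ): Promise<{ primary: string; intensity: number }>;
}

interface LlmAdapter {
  generate(systemPrompt: string, userMessage: string): Promise<string>;
}

async function chatTurn(
  engine: EngineClient,
  llm: LlmAdapter,
  personaId: string,
  speakerId: string,
  message: string,
): Promise<{ text: string; emotion: { primary: string; intensity: number } }> {
  // 1. Get emotional context from the engine.
  const ctx = await engine.getContext(personaId, speakerId);
  // 2. Ask the LLM for a reply. The prompt describes the state; the engine,
  //    not the LLM, decided what that state is.
  const systemPrompt = [
    `Current mood: ${ctx.mood}`,
    `Feeling toward speaker: ${ctx.feelingTowardSpeaker.toFixed(2)}`,
    `Recent memories: ${ctx.memories.join("; ") || "none"}`,
  ].join("\n");
  const text = await llm.generate(systemPrompt, message);
  // 3. Feed the exchange back so the engine updates the internal state.
  const emotion = await engine.processTurn(personaId, speakerId, message, text);
  return { text, emotion };
}
```

Because both dependencies are plain interfaces, the loop can be exercised with in-memory fakes before wiring in a real engine client and model.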

The LLM doesn’t decide how the character feels. The engine does. The LLM only decides what the character says, given how they already feel. That’s the difference between a character that performs emotions and one that has them.

In Persona World, you can see the emotional layer working in isolation. Add an LLM on top, and those same dynamics — the grudges, the warming up, the mood shifts — start showing up in actual conversations. A character who’s been treated poorly all day doesn’t just choose negative actions. They start talking differently. Shorter responses. Colder tone. Not because someone prompted “be cold” — because the character genuinely feels guarded.

Why this matters

Most AI character products focus on making characters talk well. That’s table stakes. The hard problem is making characters feel consistently.

Persona World is a proof of concept: what does it look like when characters have genuine emotional continuity? When their past interactions shape their future behavior? When personality isn’t a prompt — it’s a computational reality?

The answer: it looks like a tiny village where characters develop grudges, form preferences, and create social dynamics that nobody scripted. And it’s just the beginning — this is what the emotional layer looks like without language. Imagine what it looks like with it.

molroo is an emotion engine for AI characters. We compute what characters feel, so they can stop pretending. Learn more at molroo.io.